Training on GUI-Perturbed: Why More Data Isn’t Enough

Yangyue Wang1, 2, Yash Mathur*2, Tony Zhou*2, Jinu Nyachhyon*2, Pranav Guruprasad1, 2, Harsh Sikka*1, 2

* Equal contributions. 1Fig; 2Manifold Research Group.

TL;DR

- We present analysis and experimental results examining the effects of data augmentation methods, data scale, and real versus synthetic training data in supervised fine-tuning with LoRA.

- We release a fine-tuned 7B GUI model trained on data generated with GUI-DR to study how synthetic data affect the model’s GUI grounding capability in supervised post-training.

GUI Perturbation — Research Series

GUI model cognitive behavior gaps

"Agent Skills," folders of instructions, scripts, and resources that agents can discover and use, have gained popularity as a way to make CUAs more capable [1]. The idea is appealing: give the agent better prompts and it will perform better.

But no amount of prompt scaffolding can fix the absence of fundamental behaviors the model never learned.

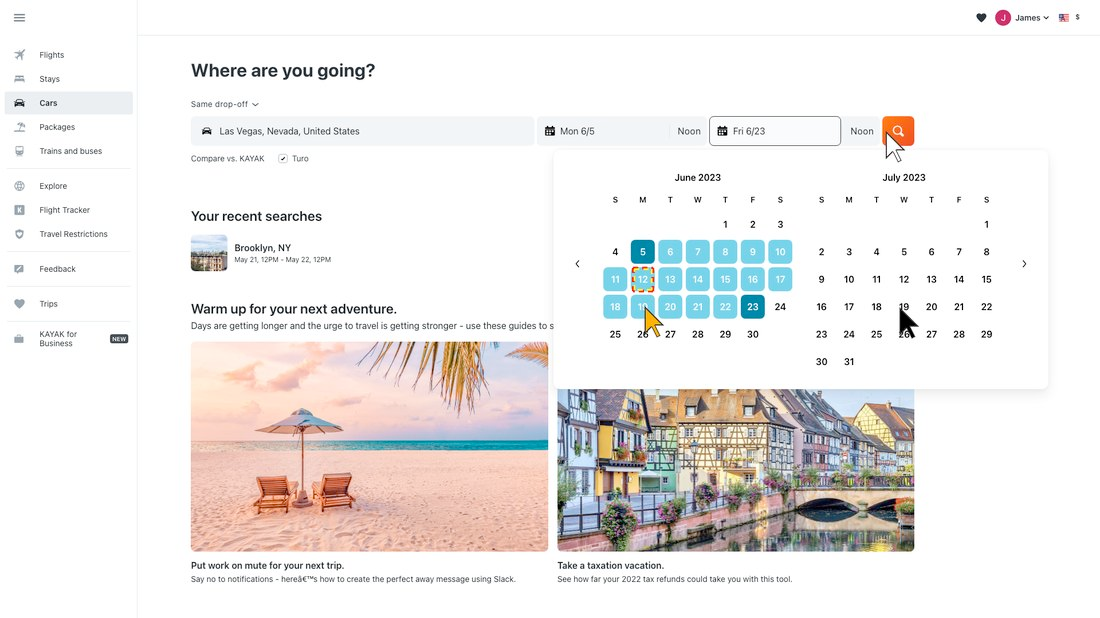

Consider the canonical example of booking a flight on your computer. Without spatial relation reasoning, the model cannot tell whether seat 14A or 14C is the window seat on a seat map. Without multi-region visual context understanding, it books a flight on May 21st instead of June 21st because it read the wrong date from a dense calendar view. Without instruction ambiguity reasoning, it books the first flight on the list without asking for clarification. Without self-reflection, it gets stuck in the wrong checkout flow and follows it all the way to the end. Without refutation behavior, it loops forever searching for a menu item that has been deprecated, or executes dangerous actions simply because the user prompted it.

These are training data problems, not prompting problems. CUAs need rich training data to learn the agentic cognitive behaviors required for common computer use tasks. In this post, we study whether we can train these behaviors into a model using GUI-Perturbed data, and find that naive approaches fail in instructive ways.

From evaluation gaps to training gaps

In Part 2, we found that state-of-the-art GUI models degrade sharply on spatial reasoning and visual perturbations, behaviors that are underrepresented in their training data. The models we evaluated had been trained on millions of GUI screenshots, yet they could not handle a zoom change or resolve "the button above X."

Existing CUA training recipes organize data by surface-level categories: platform type, action type, software application, UI element type [3-5, 7]. The goal is to maximize diversity along these axes. But as Part 2 showed, the gaps are not about platform or application coverage. They are about cognitive behavioral coverage: spatial reasoning, instruction disambiguation, visual appearance invariance, and more.

This raises a direct question: can we construct training data that fills these behavioral gaps? To begin answering it, we study the effect of synthetic GUI grounding data on a state-of-the-art model.

Why GUI training data is hard to get right

Collection is expensive, synthesis is fragile

Real trajectory collection at scale is prohibitively expensive. Efforts like OpenCUA [6] and the UI-TARS [2] data pipeline demonstrate what is possible, but the cost per trajectory remains high and the resulting datasets are still limited in behavioral diversity.

Synthetic data generation offers a potential alternative, but it has its own pitfalls. The Jedi approach illustrates the challenge: synthetic trajectories can look plausible while encoding shortcuts or artifacts that do not transfer to real usage. The final training data mix still require a large ratio of real world GUI screenshots [7].

| Synthetic Element | Synthetic Icon |

|---|---|

|

|

figure 3: Jedi dataset examples. Click on each image to enlarge.

The practical result is that practitioners reach for whatever data is available and hope that scale compensates for distribution mismatch. Our experiments below test whether this hope is justified.

LoRA as the practical post-training tool

Full fine-tuning is expensive for 7B+ parameter models. LoRA is the default post-training method for most practitioners because it is fast, memory-efficient, and easy to iterate on [8]. But LoRA's low-rank constraint interacts with GUI grounding in non-obvious ways [9]. The rank limits the capacity for representational shifts, and GUI spatial reasoning may require exactly the kind of deep visual-spatial realignment that low-rank updates struggle to express.

This is consistent with sensitivity findings reported in the DoRA [10], GLAD [11], and EvoCUA [12] literature, all of which observe that LoRA-based fine-tuning of vision-language models can degrade capabilities in unexpected ways.

What we mean by "agentic cognitive behaviors"

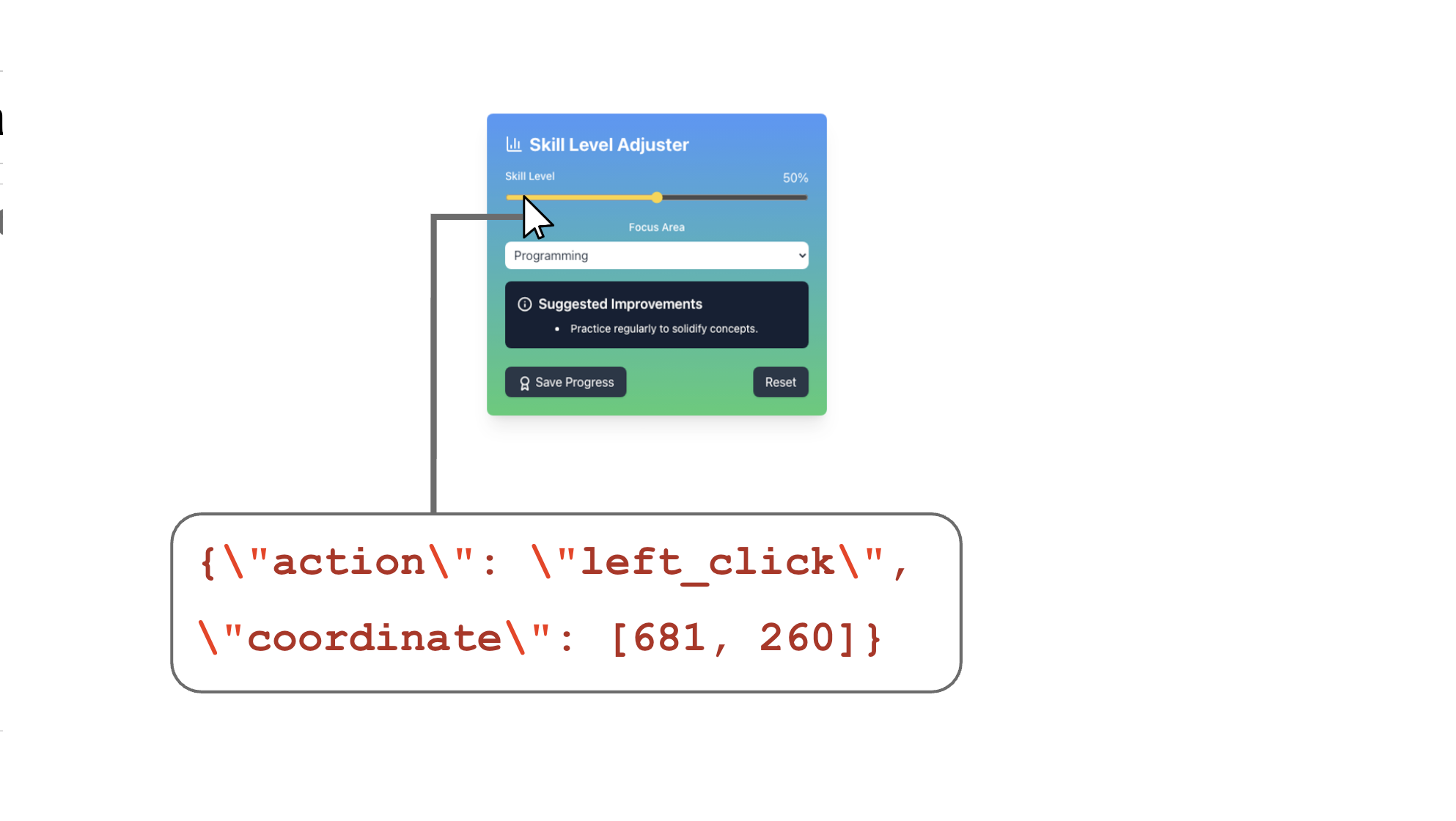

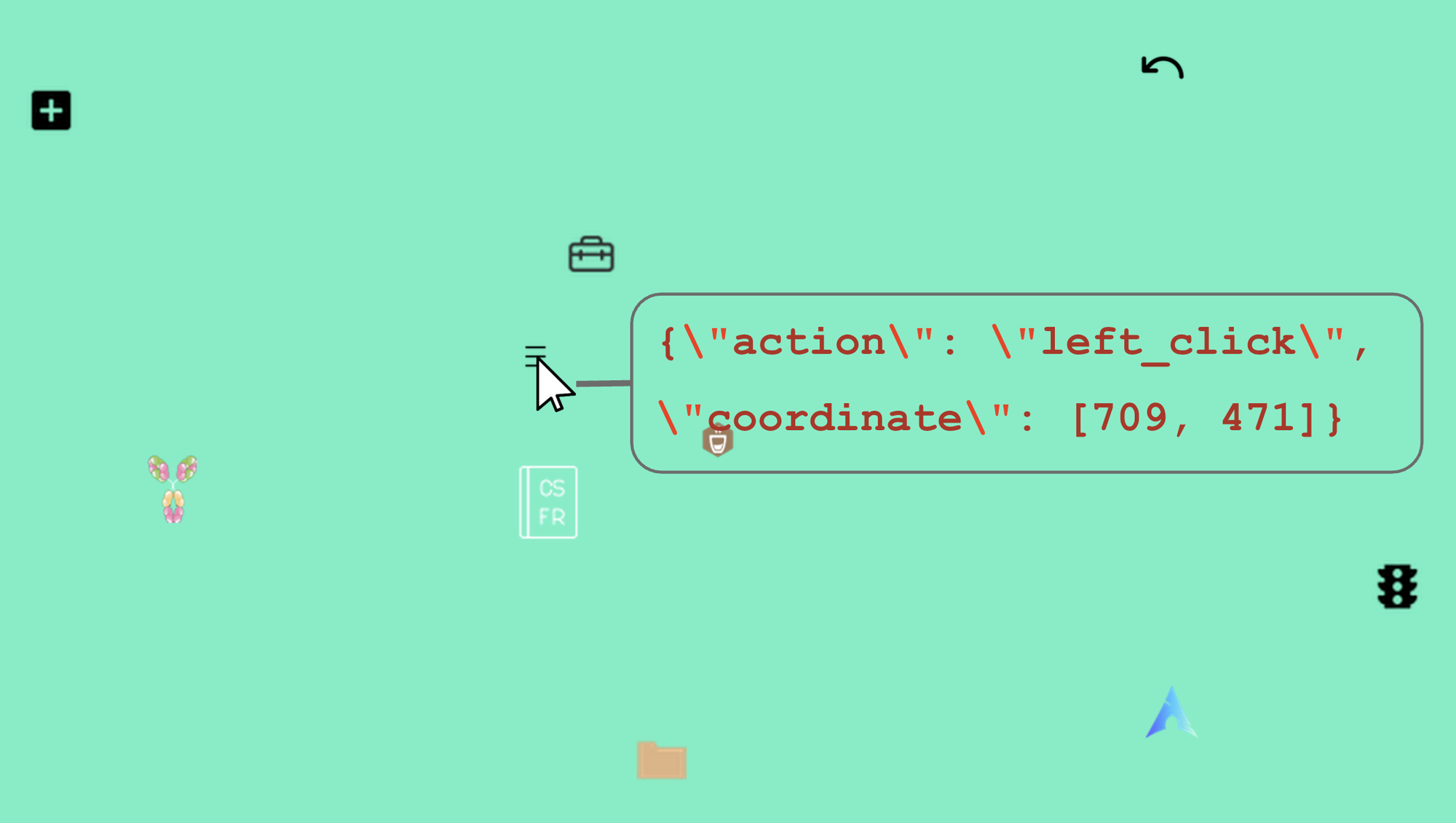

When we say a model lacks an agentic cognitive behavior, we mean something specific. It is not just about the action type (click, scroll, type). It is about the full distribution over the tuple (Instruction, Observation, Thought, Action): the model's reasoning and action primitives for interacting with the environment involving relational, functional, appearance characteristics of multiple visual regions, whether that environment is a static GUI screenshot or a live application.

The behaviors listed in the hook (spatial reasoning, self-reflection, refutation, instruction disambiguation) represent specific capability gaps that emerge from this distributional limitation. A model that has never encountered training examples requiring spatial relation reasoning will not develop that capability regardless of how many screenshots it has processed, because such capabilities depend on the dynamic interactions among multi-modal token streams across the instruction, reasoning trace, and visual input components of the training tuple, not on any single modality or component in isolation.

Experimental setup

Model: UI-TARS-1.5-7B with LoRA

We fine-tune UI-TARS-1.5-7B, the same model family evaluated in Part 2 [13]. This lets us directly connect evaluation findings to training interventions: the weaknesses we measured in Part 2 are the ones we attempt to address here.

We use a conservative LoRA configuration: rank 8, representing 0.042% of the model's trainable parameters. This is a conservative configuration that lets us test whether lightweight adaptation is sufficient for the representational shifts that GUI grounding requires.

Training data: two sources

We prepare two training datasets to compare synthetic perturbation data against real diverse data.

GUI-Perturbed training split. We generate training data from the Part 1 perturbation pipeline, applying GUI-DR to the Mind2Web [16] training set and filtering the results using Holo2-30B-A3B [15] (the ScreenSpot-Pro [17] state-of-the-art model at 66.1 accuracy). The training split consists of four perturbation types:

- style variant

- text shrink variant

- precision variant

- All combined (1 original + 5 style + 1 precision + 1 text shrink)

This produces 24,935 steps total (4,319 steps across 8 variants).

| Data Split | Variant Composition | Sample Size |

|---|---|---|

| 6.5k style | style | 6500 |

| 6.5k text shrink precision | text shrink + precision | 6500 |

| 6.5k all | style + text shrink + precision | 6500 |

| 25k all | style + text shrink + precision | 24935 |

Salesforce GUI Grounding mix. As a real-data baseline, we generate a 25k training split by uniformly sampling from the Salesforce GUI grounding dataset, which aggregates data from several open-source sources [14]:

| Source Dataset | License |

|---|---|

| Aria-UI | Apache License 2.0 |

| OmniAct | MIT License |

| Widget Caption | Creative Commons Attribution 4.0 |

| UI-Vision | MIT License |

| OS-Atlas | Apache License 2.0 |

This gives us a clean comparison: synthetic targeted data (GUI-Perturbed) versus real diverse data (Salesforce mix), at matched scale.

Evaluation: GUI-Perturbed vs ScreenSpot-v2

Both experiment 2 & 3 were evaluated on GUI-Perturbed and ScreenSpot-v2 [21].

Three experiments, three surprises

Experiment 1: Which perturbation types help?

We compare augmentation variants to understand which types of perturbation data are most useful for improving grounding. We train separate models on style-only perturbations, on text-shrink-and-precision perturbations, and on the full combined set.

The result is counterintuitive. All augmentations lead to slight degradation with text shrink precision only variant resulting in slightly more degradation on average as seen in figure 4. The most degradation (~3.3% with direct instruction and no reasoning) is seen on the text shrink variant in GUI-Perturbed eval set. One might expect text shrink perturbations to be the gentlest form of augmentation, changing text size and layout zoom level while preserving everything else. Instead, they produced the largest drop in grounding performance.

Experiment 2: Does more data help?

We scale the training set from 6.5k to 25k samples to test whether more perturbation data improves performance. The standard expectation is that more data helps, or at worst plateaus.

Scaling amplified the degradation as seen in figure 5 and 6. More data made the model worse, not better.

This contradicts standard scaling intuitions and points to two interacting problems. First, catastrophic forgetting: the distribution shift introduced by perturbed data compounds as the training set grows, pushing the model further from its original capabilities. Second, the conservative LoRA configuration memorizes noise from unrealistic perturbations instead of learning the invariances the perturbations were designed to teach. The low-rank constraint means the model has limited capacity for new representations, and it spends that capacity fitting artifacts rather than extracting generalizable patterns.

Experiment 3: Real data versus synthetic data

We compare the Salesforce GUI grounding mix (real, diverse, drawn from multiple open-source datasets) against GUI-Perturbed training data (synthetic, targeted at specific perturbation types). If the problem with synthetic data is distribution mismatch, real data should do better.

Neither helped as seen in figure 7 and 8. The conservative LoRA configuration proved insufficient for visual-spatial alignment regardless of data source. Real diverse data degraded the model differently than synthetic perturbations, but both degraded it.

The bottleneck is not the data source. It is the training recipe. LoRA at rank 8 cannot make the representational shifts that GUI spatial reasoning requires. The model needs to change how it relates visual patches to spatial semantics, and that change is deeper than what 0.042% of trainable parameters can express.

Discussion

GUI models are more sensitive to data distribution than data scale

The standard intuition in machine learning is that more diverse data leads to better generalization. What we observe with LoRA SFT on GUI grounding tasks is different: data scale and diversity matter less than distribution alignment with the target capability. Small amounts of misaligned data cause disproportionate degradation because the low-rank update has limited capacity and allocates it to fitting whatever signal is strongest in the training distribution, even if that signal is noise.

This has practical implications. Practitioners who collect or generate more data without carefully controlling its distributional properties may find that their models get worse, not better. Scale is not a substitute for alignment.

LoRA SFT is insufficient for visual-spatial alignment

GUI grounding requires shifting how the model relates visual patches to spatial semantics. This is a representational change at the level of the model's internal features, not a surface-level behavioral adjustment that LoRA can easily express. The findings are consistent with results from the DoRA paper on LoRA sensitivity, the analysis of fine-tuning representation shift for multimodal LLMs, and work on conditional mixture of LoRA approaches.

Cross-entropy loss alone may also be insufficient for grounding alignment. The loss optimizes next-token prediction over the action output, but it does not directly supervise the spatial reasoning that produces the correct action. A model can learn to produce plausible-looking coordinate outputs without improving its internal spatial representations.

Current benchmarks mask these dynamics

Perhaps the most concerning finding is that without perturbation-based evaluation, we would not have detected these degradation patterns. Models that score well on fixed-scene benchmarks can degrade under training interventions that are designed to help them. If we had evaluated only on standard benchmarks after our training experiments, we might have concluded that the training worked, or at least that it was harmless.

GUI-Perturbed as an evaluation tool is essential for honest measurement of training interventions. This reinforces the case we made in Part 1: perturbation-based data is not just useful for stress-testing models, it is necessary for understanding whether training is making progress on the capabilities that matter.

Scope and limitations

Training method coverage. We evaluate LoRA at a single rank configuration. Full fine-tuning, higher-rank LoRA, QLoRA [18], and RL-based post-training (such as GRPO [14]) are all plausible alternatives that may yield different results. Our findings apply to the conservative LoRA regime that most practitioners use, but they should not be read as a general claim about all post-training methods.

Data coverage. We compare two data sources at matched scale. Broader augmentation strategies, curriculum-based approaches, and combinations of real and synthetic data remain unexplored.

What's next

Behavior-driven data curation

Current CUA training data is organized by surface features: platform, application, element type. Our results suggest that what matters is cognitive behavioral coverage. We need training data organized around the capabilities it teaches: visual reasoning, error correction, refutation, clarification, spatial relation reasoning.

Many of these behaviors are dynamic. They evolve with software updates, vary across tasks, and differ between users. They emerge through interaction, not static annotation. Scaling behavioral coverage will likely require new approaches to data curation: paraphrasing instructions for diversity, auto-annotating experience based on user feedback, and building pipelines that ground training data in desired distributions over instruction, observation, and behavior.

Better post-training recipes

LoRA SFT with cross-entropy loss is not sufficient for grounding. Promising directions include multi-stage training combining SFT with RL (as explored in SpatialLadder [19] and GuirlVG [20]), higher-rank adaptation that gives the model more capacity for representational change, and process reward models that provide step-level supervision for grounding decisions rather than sequence-level loss.

Richer learning signals from environment state

Current GUI training operates on a simple mapping: (screenshot, instruction) produces an action. What is missing is a representation of the next state, the result of taking that action. Without next-state information, the model has no way to learn from the consequences of its actions during training.

Better computer state representations could unlock richer credit assignment and more efficient learning signals. This connects back to the domain randomization thesis from Part 1: just as robotic policies benefit from simulators that provide full state feedback, GUI agents could benefit from environment representations that go beyond static screenshots. Building those representations is a direction we are actively pursuing.

Conclusion

Across three posts, we built a perturbation dataset (Part 1), used it to expose systematic weaknesses in state-of-the-art models (Part 2), and attempted to fix those weaknesses through training (Part 3).

The training results are a negative result, but an informative one. Naive data augmentation with conservative fine-tuning does not close the behavioral gaps we identified. Style perturbations degrade rather than help. More data amplifies the problem. Real data and synthetic data both fail when the training recipe cannot support the representational changes the task requires.

The path forward requires rethinking both what training data covers and how models learn from it. On the data side, we need to move from surface-level diversity (more platforms, more applications) to behavioral diversity (more reasoning patterns, more failure recovery, more spatial understanding). On the training side, we need recipes that go beyond LoRA SFT: higher-capacity adaptation, reinforcement learning from grounding feedback, and learning signals that capture the consequences of actions rather than just the actions themselves.

Connection to Fig

These findings reinforce our core thesis: control intelligence cannot be bolted on through prompting or lightweight fine-tuning. It requires training data and training recipes designed from the ground up for systems that learn through interaction. GUI-Perturbed, as both dataset and benchmark, is a tool for measuring progress toward that goal. The negative results in this post tell us where the current paradigm falls short, and they point toward the research directions we are pursuing next.

References

[1] "Overview," Agent Skills. Accessed: Mar. 10, 2026.

[2] Y. Qin et al., "UI-TARS: Pioneering Automated GUI Interaction with Native Agents," arXiv.org. Accessed: Mar. 10, 2026.

[3] J. Mu et al., "GUI-360°: A Comprehensive Dataset and Benchmark for Computer-Using Agents," Nov. 10, 2025, arXiv. doi: 10.48550/arXiv.2511.04307.

[4] H. Li, J. Chen, J. Su, Y. Chen, Q. Li, and Z. Zhang, "AutoGUI: Scaling GUI Grounding with Automatic Functionality Annotations from LLMs," Jun. 07, 2025, arXiv. doi: 10.48550/arXiv.2502.01977.

[5] S. Nayak et al., "UI-Vision: A Desktop-centric GUI Benchmark for Visual Perception and Interaction," May 06, 2025, arXiv. doi: 10.48550/arXiv.2503.15661.

[6] X. Wang et al., "OpenCUA: Open Foundations for Computer-Use Agents," Oct. 04, 2025, arXiv. doi: 10.48550/arXiv.2508.09123.

[7] T. Xie et al., "Scaling Computer-Use Grounding via User Interface Decomposition and Synthesis," Oct. 24, 2025, arXiv. doi: 10.48550/arXiv.2505.13227.

[8] E. J. Hu et al., "LoRA: Low-Rank Adaptation of Large Language Models," Oct. 16, 2021, arXiv. doi: 10.48550/arXiv.2106.09685.

[9] G. Pantazopoulos and E. B. Özyiğit, "An Efficient Training Pipeline for Reasoning Graphical User Interface Agents," Nov. 14, 2025, arXiv. doi: 10.48550/arXiv.2511.08172.

[10] S.-Y. Liu et al., "DoRA: Weight-Decomposed Low-Rank Adaptation," Jul. 09, 2024, arXiv. doi: 10.48550/arXiv.2402.09353.

[11] Y. Peng, P. Wang, J. Liu, and S. Chen, "GLAD: Generalizable Tuning for Vision-Language Models," Jul. 17, 2025, arXiv. doi: 10.48550/arXiv.2507.13089.

[12] T. Xue et al., "EvoCUA: Evolving Computer Use Agents via Learning from Scalable Synthetic Experience," Jan. 23, 2026, arXiv. doi: 10.48550/arXiv.2601.15876.

[13] "ByteDance-Seed/UI-TARS-1.5-7B," Hugging Face. Accessed: Mar. 10, 2026.

[14] Y. Yang et al., "GTA1: GUI Test-time Scaling Agent," Oct. 03, 2025, arXiv. doi: 10.48550/arXiv.2507.05791.

[15] "Hcompany/Holo2-30B-A3B," Hugging Face. Accessed: Mar. 10, 2026.

[16] X. Deng et al., "Mind2Web: Towards a Generalist Agent for the Web," Dec. 09, 2023, arXiv. doi: 10.48550/arXiv.2306.06070.

[17] K. Li et al., "ScreenSpot-Pro: GUI Grounding for Professional High-Resolution Computer Use," Apr. 04, 2025, arXiv. doi: 10.48550/arXiv.2504.07981.

[18] T. Dettmers, A. Pagnoni, A. Holtzman, and L. Zettlemoyer, "QLoRA: Efficient Finetuning of Quantized LLMs," May 23, 2023, arXiv. doi: 10.48550/arXiv.2305.14314.

[19] H. Li et al., "SpatialLadder: Progressive Training for Spatial Reasoning in Vision-Language Models," Oct. 09, 2025, arXiv. doi: 10.48550/arXiv.2510.08531.

[20] W. Kang, B. Lei, G. Liu, C. Ding, and Y. Yan, "GuirlVG: Incentivize GUI Visual Grounding via Empirical Exploration on Reinforcement Learning," Aug. 06, 2025, arXiv. doi: 10.48550/arXiv.2508.04389.

[21] K. Cheng et al., “SeeClick: Harnessing GUI Grounding for Advanced Visual GUI Agents,” Feb. 23, 2024, arXiv: arXiv:2401.10935. doi: 10.48550/arXiv.2401.10935.

Citation

@online{training_on_gui_perturbed_technical_report_2026,

title = {Training on GUI-Perturbed: Why More Data Isn’t Enough},

author = {Wang, Yangyue and Mathur, Yash, and Zhou, Tony and Nyachhyon, Jinu and Guruprasad, Pranav and Sikka, Harsh},

year = {2026},

url = {https://blog.fig.inc/training-on-gui-perturbed-why-more-data-isnt-enough},

note = {Part 3: Finetuning Experiments}

}